Technical SEO has certainly fallen out of fashion somewhat with the rise of content marketing, and rightly so.

Content marketing engages users and delivers real value to them, and it can put you on the map, putting your brand in front of far more eyeballs than fixing a canonical tag ever could.

While content is at the heart of everything we do, there is a danger that ignoring a site’s technical set-up and diving straight into content creation will not deliver the required returns. Failure to properly audit and resolve technical concerns can cut your content efforts off from the benefits they should be bringing to your website.

The following eight issues need to be considered before committing to any major campaign:

1. Not hosting valuable content on the main site

For whatever reason, websites often choose to host their best content off the main website, either in subdomains or separate sites altogether. Normally this is because it is deemed easier from a development perspective. The problem with this? It’s simple.

If content is not in your main site’s directory structure, Google won’t treat it as part of your main site. Links acquired by a subdomain will not benefit the main site in the same way as they would if the content sat in a directory on the main site.

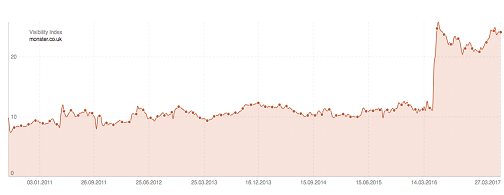

Sistrix posted this great case study on the job site Monster, who recently migrated two subdomains into their main site and saw an uplift of 116% visibility in the UK. The chart speaks for itself:

We recently worked with a client who came to us with a thousand referring domains pointing towards a blog subdomain. This represented one third of their total referring domains. Can you imagine how much time and effort it would take to build one thousand referring domains?

The cost of migrating content back into the main site is minuscule compared with earning links from one thousand referring domains, so the business case was simple, and the client saw a sizeable boost as a result.

2. Not making use of internal links

The best way to get Google to spider your content and pass equity between sections of the website is through internal links.

I like to think of a website’s link equity as heat that flows through the site via its internal links. Some pages are linked to liberally and so are really hot; other pages are pretty cold, receiving only a trickle of heat from other sections of the site. Google will struggle to find and rank these cold pages, which massively limits their effectiveness.

Let’s say you’ve created an awesome bit of functional content around one of the key pain points your customers experience. There’s loads of search volume in Google and your site already has a decent amount of authority, so you expect to gain visibility for it immediately. But you publish the content and nothing happens!

You’ve hosted your content in some cold directory miles away from anything that is regularly getting visits and it’s suffering as a result.

This works both ways, of course. Say you have a page with lots of external links pointing to it but no outbound internal links. This page will be red hot, but it is hoarding equity that could be used elsewhere on the site.

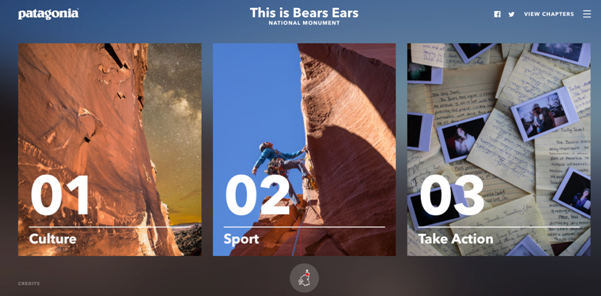

Check out this awesome bit of content created about Bears Ears national park:

Ignoring the fact that this has broken rule No. 1 and is on a subdomain, it’s pretty cool, right?

Except they’ve only got a single link back to the main site, and it is buried in the credits at the bottom of the page. Why couldn’t they have made the logo a link back to the main site?

You’ll probably have plenty of content pages that are great link magnets, but these are most likely not your key commercial pages. You want to ensure relevant links are included between hot pages and key pages.
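The heat analogy maps loosely onto PageRank-style models of link equity. The sketch below is a simplified, textbook PageRank over internal links only; it is not Google’s actual ranking system, external links are not modelled, and the page names and link graph are hypothetical. It compares a commercial page’s score before and after a relevant link is added to it from a content page.

```python
def internal_pagerank(links, damping=0.85, iterations=100):
    """Textbook PageRank over an internal-link graph.

    links maps each page to the list of pages it links to; every target
    must also appear as a key. External links are ignored.
    """
    pages = list(links)
    n = len(pages)
    scores = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Pages with no outgoing internal links spread their score evenly.
        dangling = sum(scores[page] for page in pages if not links[page])
        new_scores = {page: (1 - damping) / n + damping * dangling / n for page in pages}
        for page, targets in links.items():
            for target in targets:
                # Each page splits its score equally among the pages it links to.
                new_scores[target] += damping * scores[page] / len(targets)
        scores = new_scores
    return scores


# Hypothetical site: the guide is a popular content page that only links back to the blog.
before = {
    "home": ["blog", "product"],
    "blog": ["home", "guide"],
    "guide": ["blog"],
    "product": ["home"],
}
# The same site after adding a relevant link from the guide to the product page.
after = dict(before, guide=["blog", "product"])

print(f"product before: {internal_pagerank(before)['product']:.3f}")
print(f"product after:  {internal_pagerank(after)['product']:.3f}")
```

With these hypothetical graphs the product page’s score rises from about 0.175 to 0.250, purely because a well-linked content page now passes some of its equity on.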

One final example of this is the failure to break up paginated content with category or tag pages. At Zazzle Media we’ve got a massive blog section which, at the time of writing, has 49 pages of paginated content! Link equity is not going to be passed through 49 paginated pages to historic blog posts.

To get around this, we included links to our blog posts from our author pages, which are linked to from a page in the main navigation.

This change puts our blog posts within three clicks of the homepage, so they receive vital link equity.

Another way around this would be to add tag or category pages to the blog. Just make sure these pages do not cannibalize other sections of the site!
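Checking whether posts sit within three clicks of the homepage is a breadth-first search over internal links. A minimal sketch: the URLs and the team/author page structure below are hypothetical, loosely modelled on the author-page change described above, and the link map would in practice come from a site crawl.

```python
from collections import deque


def click_depths(links, start="/"):
    """Breadth-first search: minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # the first visit in BFS is along a shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical blog where an old post is only reachable through pagination.
paginated_only = {
    "/": ["/blog/"],
    "/blog/": ["/blog/page/2/"],
    "/blog/page/2/": ["/blog/page/3/"],
    "/blog/page/3/": ["/blog/old-post/"],
}
# Add a team page in the main navigation that links to author pages.
with_author_pages = dict(paginated_only)
with_author_pages["/"] = ["/blog/", "/team/"]
with_author_pages["/team/"] = ["/team/jane/"]
with_author_pages["/team/jane/"] = ["/blog/old-post/"]

print(click_depths(paginated_only)["/blog/old-post/"])     # 4
print(click_depths(with_author_pages)["/blog/old-post/"])  # 3
```

Running the same function over a full crawl and filtering for depths greater than three gives a quick list of posts that are likely to be starved of equity.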

3. Poor crawl efficiency

Crawl efficiency is a massive issue we see all the time, especially with larger sites. Essentially, Google will only crawl a limited number of pages on your site at any one time. Once it has exhausted this crawl budget, it moves on and returns at a later date.

If your website has an unreasonably large number of URLs, Google may get stuck crawling unimportant areas of your website while failing to index new content quickly enough.

The most common cause of this is an unreasonably large number of query parameters being crawlable.

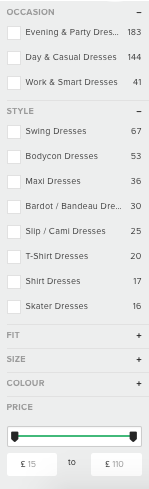

You might see the following parameters working on your website:

https://www.example.com/dresses

https://www.example.com/dresses?category=maxi

https://www.example.com/dresses?category=maxi&colour=blue

https://www.example.com/dresses?category=maxi&size=8&colour=blue

Functioning parameters are rarely search-friendly. Creating hundreds of variations of a single URL for engines to crawl individually is one big crawl budget black hole.

River Island’s faceted navigation creates a unique parameter for every combination of buttons you can click.

This creates thousands of different URLs for each category on the site. They have implemented canonical tags to specify which pages they want in the index, but canonical tags do not control which pages are crawled, so much of their crawl budget will still be wasted on these URLs.
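Counting the URLs shows why faceted navigation becomes a crawl budget black hole. A rough sketch, assuming hypothetical option counts for a single category page: with single-select facets each facet is either unset or set to one value, so the number of URLs is the product of (options + 1); with multi-select facets any subset of options can be ticked, giving 2^options per facet.

```python
from math import prod


def single_select_urls(facets):
    """Each facet is either unset or set to exactly one of its options."""
    return prod(options + 1 for options in facets.values())


def multi_select_urls(facets):
    """Each facet can have any subset of its options ticked."""
    return prod(2 ** options for options in facets.values())


# Hypothetical option counts for one category page.
dress_facets = {"category": 5, "colour": 10, "size": 8}

print(single_select_urls(dress_facets))  # 594
print(multi_select_urls(dress_facets))   # 8388608
```

These counts assume parameters always appear in the same order; if the same filters can appear in different orders in the URL, the number of distinct crawlable URLs grows further.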

Google have released their own guidelines on how to properly implement faceted navigation, which is certainly worth a read.

As a rule of thumb, though, we recommend blocking these parameters from being crawled, either by marking the links themselves with a nofollow attribute or by using robots.txt or the URL Parameters tool in Google Search Console.
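A robots.txt rule such as `Disallow: /*?` blocks any URL that carries a query string. Python’s standard `urllib.robotparser` only does prefix matching and does not support Google’s `*` and `$` wildcards inside paths, so the sketch below implements a simplified matcher under these assumptions: the most specific (longest) matching rule wins and `Allow` wins ties, as Google documents; user-agent groups and percent-encoding are ignored. The rules and URLs are hypothetical, and a check like this is a cheap way to confirm a rule set does not block priority pages before deploying it.

```python
import re
from urllib.parse import urlsplit


def rule_to_regex(pattern):
    """Translate a robots.txt path pattern ('*' wildcard, trailing '$' anchor) to a regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(url, rules):
    """rules: list of ("allow" | "disallow", pattern) pairs for a single user agent."""
    parts = urlsplit(url)
    path = (parts.path or "/") + ("?" + parts.query if parts.query else "")
    best = None  # (pattern length, is_allow) of the most specific matching rule
    for directive, pattern in rules:
        if pattern and rule_to_regex(pattern).match(path):
            candidate = (len(pattern), directive == "allow")
            if best is None or candidate > best:
                best = candidate
    return True if best is None else best[1]


# Hypothetical rule: block every URL that carries a query string.
rules = [("disallow", "/*?")]

for url in [
    "https://www.example.com/dresses",
    "https://www.example.com/dresses?category=maxi",
    "https://www.example.com/dresses?category=maxi&colour=blue",
]:
    print(url, "allowed" if is_allowed(url, rules) else "blocked")
```

Because longer patterns win, a narrower `Allow` rule (for example, a hypothetical `("allow", "/*?page=")`) can carve out exceptions to the blanket block.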

All priority pages should be linked to from elsewhere anyway, not just from the faceted navigation. River Island have already done this part.

Another common cause of crawl inefficiency arises from having multiple versions of the website accessible, for example:

https://www.example.com

http://www.example.com

https://example.com

http://example.com

Even if the canonical tag specifies the first URL as the default, this isn’t going to stop search engines from crawling other versions of the site if they are accessible. This is especially pertinent if other versions of the site have a lot of backlinks.

Leaving all versions of the site accessible can make four versions of every page crawlable, which will kill your crawl budget. Redirect rules should be set up so that any request for a non-canonical version of a page is 301 redirected to the preferred version in a single step.
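The single-step requirement boils down to computing the final preferred URL from whatever variant was requested, rather than chaining one redirect for the protocol and another for the hostname. A minimal sketch, assuming `https://www.example.com` is the preferred version; in practice this logic lives in your web server or CDN redirect rules, and `redirect_target` is a hypothetical helper for illustration.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumed preferred version of the hypothetical site.
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "www.example.com"


def redirect_target(url):
    """Return the URL a 301 should point to, or None if no redirect is needed.

    Scheme and host are fixed together, so every variant reaches the
    preferred version in one hop instead of http -> https -> www.
    """
    parts = urlsplit(url)
    if parts.hostname not in ("example.com", "www.example.com"):
        return None  # not this site; leave it alone
    target = urlunsplit((PREFERRED_SCHEME, PREFERRED_HOST, parts.path, parts.query, parts.fragment))
    return None if target == url else target


for variant in [
    "https://www.example.com/dresses",
    "http://www.example.com/dresses",
    "https://example.com/dresses",
    "http://example.com/dresses",
]:
    print(variant, "->", redirect_target(variant))
```

Path and query string are carried over unchanged, so deep links from backlinks on the old versions land on the equivalent preferred page rather than the homepage.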

One final example of wasted crawl budget is broken or redirected internal links. We once had a client query how long it was taking for content in a certain directory to get indexed. Crawling the directory, we realised instantly that every single internal link within it pointed to a version of the URL without a trailing slash, and a redirect then added the trailing slash.

Essentially, for every link followed, two pages were requested. Broken and redirected internal links are not a massive priority for most sites, as the benefit does not justify the resources required to fix them. Even so, it is certainly worth resolving priority issues (such as links within the main navigation or, in our case, entire directories of redirecting links), especially if you have a problem with how quickly your content is being indexed.
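Once a site crawl has given you a map of redirecting URLs to their targets, spotting links like these is simple. A minimal sketch, assuming a hypothetical `redirects` dict exported from a crawl (the format is made up for illustration): it counts how many requests each internal link costs and lists the links that should point straight at their final destination.

```python
def requests_per_link(href, redirects, max_hops=10):
    """Count HTTP requests a crawler makes to reach the final page from one internal link."""
    requests, url = 1, href
    while url in redirects:
        url = redirects[url]
        requests += 1
        if requests > max_hops:
            raise ValueError(f"redirect loop or overly long chain starting at {href}")
    return requests


def redirected_links(hrefs, redirects):
    """Internal links that should be updated to point straight at their final destination."""
    return [href for href in hrefs if requests_per_link(href, redirects) > 1]


# Hypothetical directory where the server appends a trailing slash.
redirects = {
    "/guides/blue-widgets": "/guides/blue-widgets/",
    "/guides/red-widgets": "/guides/red-widgets/",
}
links_in_directory = ["/guides/blue-widgets", "/guides/red-widgets", "/guides/"]

print(redirected_links(links_in_directory, redirects))
print(sum(requests_per_link(h, redirects) for h in links_in_directory))  # 5 requests for 3 links
```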

Just imagine if your site had all three issues! Endless functioning parameters on four separate versions of the site, each requiring double the number of page requests!

4. Large amounts of thin content

In the post-Google Panda world we live in, this really is a no-brainer. If your website has large amounts of thin content, then sprucing up one page with 10x better content is not going to be enough to hide the deficiencies your website already has.

The Panda algorithm essentially scores your website based on the amount of unique, valuable content it has. If the majority of your pages do not meet the minimum score required to be deemed valuable, your rankings will plummet.

While everyone wants the next big viral idea on their website, when doing the initial content audit it’s more important to look at the site’s current content and ask the following questions: Is it valuable? Is it performing? If not, can it be improved to serve a need? Pages that cannot be improved may need to be removed.

Content hygiene is more important initially than the “big hero” ideas, which come at a later point within the relationship.
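The audit questions can be turned into a rough first-pass triage. A minimal sketch with hypothetical thresholds: word count is only a crude proxy for thin content, the organic session figure would come from your analytics, and whether a page can be improved remains an editorial judgement recorded during the audit.

```python
from dataclasses import dataclass


@dataclass
class AuditedPage:
    url: str
    word_count: int
    organic_sessions: int  # e.g. last 12 months, from analytics
    improvable: bool       # editorial judgement made during the audit


def triage(page, min_words=300, min_sessions=50):
    """First-pass decision; the thresholds are illustrative, not recommendations."""
    substantial = page.word_count >= min_words
    performing = page.organic_sessions >= min_sessions
    if substantial and performing:
        return "keep"
    if page.improvable:
        return "improve"
    return "remove"


# Hypothetical audit rows.
pages = [
    AuditedPage("/guides/choosing-blue-widgets/", 1800, 420, False),
    AuditedPage("/news/2014-office-move/", 120, 2, False),
    AuditedPage("/blog/widget-sizes/", 250, 35, True),
]
for page in pages:
    print(page.url, triage(page))
```

The output is a starting point for human review, not a verdict; a short page can still be valuable if it answers its query well.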

5. Large amounts of content with overlaps in keyword targeting

We still see websites making this mistake in 2017. For example, if our main keyword is blue widgets and it is being targeted on a service page, we might want to write a blog post about blue widgets too! Because it’s our main service offering, let’s put a blurb about blue widgets on our homepage. Oh, and of course, we also have a “features of our blue widgets” page.

No! Just stop, please! The rule of one keyword per page has been around for nearly as long as SEO, but we still see this mistake being made.

You should have one master hub page which contains all the top line information about the topic your keyword is referencing.

Only use other pages if there is significant search volume around long-tail variations of the term, and on those pages target the long-tail keyword and the long-tail keyword only.

Then link prominently between your main topic page and your long-tail pages.

If you have any additional pages that provide no search benefit, such as a features page, consider consolidating their content into the hub page or preventing them from being indexed with a meta robots noindex tag.

So, for example, we’ve got our main blue widgets page, and from it we link out to a blog post on the topic of why blue widgets are better than red widgets. Our blue widgets feature page has been removed from the index and the homepage has been de-optimized for the term.
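A keyword map makes overlaps like these easy to spot. The sketch below assumes a hypothetical mapping of each URL to the primary keyword it targets (the kind of sheet produced during keyword research) and lists every keyword claimed by more than one page.

```python
from collections import defaultdict


def keyword_overlaps(target_keywords):
    """target_keywords maps URL -> primary keyword; returns keywords targeted by 2+ pages."""
    pages_by_keyword = defaultdict(list)
    for url, keyword in target_keywords.items():
        # Normalise case and whitespace so "Blue Widgets" and "blue widgets" collide.
        pages_by_keyword[keyword.strip().lower()].append(url)
    return {kw: sorted(urls) for kw, urls in pages_by_keyword.items() if len(urls) > 1}


# Hypothetical keyword map reproducing the blue widgets mistake.
keyword_map = {
    "/": "blue widgets",
    "/services/blue-widgets/": "blue widgets",
    "/blog/blue-widgets/": "Blue Widgets",
    "/features/": "blue widgets",
    "/blog/why-blue-widgets-beat-red/": "blue widgets vs red widgets",
}
print(keyword_overlaps(keyword_map))
```

Each keyword in the result needs one hub page; the remaining pages are candidates for consolidation, de-optimization or noindexing, while genuine long-tail pages (like the comparison post) drop out because they target a different term.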

6. Lack of website authority

But content marketing helps attract authority naturally, you say! Yes, this is 100% true, but not all types of content marketing do. At Zazzle Media, we’ve found the best ROI on content creation comes from evergreen, functional content that fulfils search intent.

When we take a new client on board, we do a massive keyword research project that identifies every possible long-tail search around the client’s products and services. This gives us more than enough content ideas to bring in top-of-the-funnel traffic, which we can then strategically push down the funnel through creative use of other channels.

The great thing about this tactic is that it requires no promotion. Once it becomes visible in search, it brings in traffic regularly without any additional budget.

One consideration before adopting this tactic is how much authority the website already has. Without a certain level of authority, it is very difficult to get a web page to rank well for anything, no matter the content.

Links still matter in 2017. While brand relevancy is the new No. 1 ranking factor (certainly for highly competitive niches), links are still very much No. 2.

Without an authoritative website, you may have to step back from creating informational content for search intent, and instead focus on more link-bait types of content.

7. Lack of data

Without data, it is impossible to make an informed decision about the success of your campaigns. We use a wealth of data to make informed decisions before creating any piece of content, and then to measure our performance against those goals.

Content needs to be consumed and shared, customers retained and engaged.

Keyword tools like Storybase will provide loads of long-tail keywords on which to base your content. Ahrefs Content Explorer can help validate content ideas by comparing the performance of similar ideas.

I also love using Facebook page insights on custom audiences (built from website traffic or an email list) to extract vital information about our customer demographics.

Then there is Google Analytics.

Returning visits and pages per session measure customer retention.

Time on page, exit rate and social shares can measure the success of the content.

The number of new users and the bounce rate are a good indication of how engaged new users are.

If you’re not tracking the above metrics, you might be pursuing a method that simply does not work. Worse, how can you build on your past successes?
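Analytics tools calculate these metrics for you, but it helps to know what sits behind them. A simplified sketch over hypothetical session records: here a bounce is treated as a single-pageview session, which approximates (but is not identical to) Google Analytics’ definition, since GA can also count other interaction hits. Time on page, exit rate and social shares are left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class Session:
    user_id: str
    is_new_user: bool  # True if this is the user's first session
    pageviews: int


def summarise(sessions):
    total = len(sessions)
    return {
        # Retention signals
        "returning_session_share": sum(not s.is_new_user for s in sessions) / total,
        "pages_per_session": sum(s.pageviews for s in sessions) / total,
        # New-user engagement signals
        "new_users": len({s.user_id for s in sessions if s.is_new_user}),
        "bounce_rate": sum(s.pageviews == 1 for s in sessions) / total,
    }


# Hypothetical sessions: u1 visits twice, u2 and u3 once each.
sessions = [
    Session("u1", True, 1),
    Session("u2", True, 3),
    Session("u1", False, 2),
    Session("u3", True, 1),
]
print(summarise(sessions))
```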

8. Slow page load times

This one is a no-brainer. Amazon estimated that a one-second increase in its page load times would cost it $1.6 billion in sales. Google have published videos, documents and tools to help webmasters address page load issues.

I see poor page load times as a symptom of a much wider problem: the website in question clearly hasn’t considered the user at all. Why else would it neglect probably the biggest usability factor?

These websites tend to be clunky and offer little value, and what content they do have is hopelessly self-serving.

Striving to resolve page speed issues is a commitment to improving the experience a user has of your website. This kind of mentality is crucial if you want to build an engaged user base.

Some, if not all, of these topics justify their own blog post. The overriding message of this post is about maximising the return on investment for your efforts.

Everyone wants the big bang idea, but most aren’t ready for it yet. Technical SEO should be working hand in hand with content marketing efforts, letting you eke out the maximum ROI your content deserves.
